Delphi and iPhone helping vision impaired people

Friday, September 30, 2016

Earlier this week we released a major update of the TMS FMX Cloud Pack. The new version adds many new components that give seamless access to a range of cloud services. Among the newly covered services, two from Microsoft stand out and open up new ways to enrich our Delphi applications. In this blog, I want to present the Microsoft Computer Vision and Microsoft Bing speech services. Our new components TTMSFMXCloudMSComputerVision and TTMSFMXCloudMSBingSpeech offer instant and dead-easy access to these services. With these components at hand, the idea came up to create a small iPhone app that lets vision impaired people take a picture of their environment or of a document, have Microsoft Computer Vision analyze the picture and have Microsoft Bing speech read the result aloud.

So, roll up your sleeves and in 15 minutes you can assemble this cool iPhone app powered with Delphi 10.1 Berlin and the TMS FMX Cloud Pack!

To get started, we add the code that takes a picture with the iPhone camera. This snippet comes straight from the Delphi docs. The camera is started from a button's OnClick event:

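    // needs FMX.Platform and FMX.MediaLibrary in the uses clause;
    // the handler name Button1Click is assumed from the Button1 reference below
    procedure TForm1.Button1Click(Sender: TObject);
    var
      Service: IFMXCameraService;
      Params: TParamsPhotoQuery;
    begin
      if TPlatformServices.Current.SupportsPlatformService(IFMXCameraService,
        Service) then
      begin
        Params.Editable := True;
        // Specifies whether to save a picture to device Photo Library
        Params.NeedSaveToAlbum := False;
        Params.RequiredResolution := TSize.Create(640, 640);
        // DoDidFinish is called when the picture has been taken
        Params.OnDidFinishTaking := DoDidFinish;
        Service.TakePhoto(Button1, Params);
      end;
    end;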

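When the form is shown, the API keys obtained from Microsoft are assigned to both cloud components:

    procedure TForm1.FormShow(Sender: TObject);
    begin
      TMSFMXCloudMSBingSpeech1.App.Key := MSBingSpeechAppkey;
      TMSFMXCLoudMSComputerVision1.App.Key := MSComputerVisionAppkey;
    end;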

When the picture is taken, DoDidFinish is called with the resulting bitmap. A local copy is saved to a file and a TTask starts the analysis with the call TMSFMXCLoudMSComputerVision1.ProcessFile(s, cv). The TTask keeps the UI responsive during the analysis: after all, the image must be submitted to Microsoft and processed, and the result returned and parsed, which can take 1 or 2 seconds. Depending on the analysis type, the result is captured as text in a memo control. After this, we connect to the Bing speech service.

    procedure TForm1.DoDidFinish(Image: TBitmap);
    var
      aTask: ITask;
      s: string;
      cv: TMSComputerVisionType;
    begin
      CaptureImage.Bitmap.Assign(Image);

      // take local copy of the file for processing
      s := TPath.GetDocumentsPath + PathDelim + 'photo.jpg';
      Image.SaveToFile(s);

      // read the selected analysis type in the main thread, before the task
      // starts, so that cv always has a defined value
      if btnAn1.IsChecked then
        cv := ctOCR
      else
        cv := ctAnalysis;

      // asynchronously start image analysis
      aTask := TTask.Create(
        procedure
        var
          i: Integer;
        begin
          if TMSFMXCLoudMSComputerVision1.ProcessFile(s, cv) then
          begin
            // Description is not declared in this snippet; presumably a string field of the form
            Description := '';

            if cv = ctAnalysis then
            begin
              // concatenate the image description returned from Microsoft Computer Vision API
              for i := 0 to TMSFMXCLoudMSComputerVision1.Analysis.Descriptions.Count - 1 do
                Description := Description + TMSFMXCLoudMSComputerVision1.Analysis.Descriptions[i] + #13#10;
            end
            else
              Description := TMSFMXCLoudMSComputerVision1.OCR.Text.Text;

            // update UI in main UI thread
            TThread.Queue(TThread.CurrentThread,
              procedure
              begin
                if Assigned(AnalysisResult) then
                  AnalysisResult.Lines.Text := Description;
              end);

            TMSFMXCloudMSBingSpeech1.Connect;
          end
          else
          begin
            // update UI in main UI thread
            TThread.Queue(TThread.CurrentThread,
              procedure
              begin
                AnalysisResult.Lines.Add('Sorry, could not process image.');
              end);
          end;
        end);

      aTask.Start;
    end;
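For readers who want to try the threading pattern on its own, here is a minimal console sketch of what DoDidFinish does, with the cloud call replaced by a Sleep. It is an illustration under assumptions, not part of the demo app: the program name, the Done flag and the sample text are made up, and TThread.Queue is called with nil instead of TThread.CurrentThread, which is a common alternative. A console program has no message loop, so the main thread calls CheckSynchronize to run the queued procedure; in an FMX app the framework takes care of that.

```delphi
program TaskQueueSketch;

{$APPTYPE CONSOLE}

uses
  System.SysUtils, System.Classes, System.Threading;

var
  Done: Boolean = False; // hypothetical flag: set by the queued procedure
  Task: ITask;

begin
  Task := TTask.Create(
    procedure
    var
      ResultText: string;
    begin
      // stand-in for the slow call (upload, analysis, parsing)
      Sleep(1000);
      ResultText := 'sample description';

      // hand the result over to the main thread
      TThread.Queue(nil,
        procedure
        begin
          Writeln('Result: ', ResultText);
          Done := True;
        end);
    end);
  Task.Start;

  // the main thread stays free; here it only pumps queued calls
  while not Done do
    CheckSynchronize(50);
end.
```

The point of the pattern is the same as in the app: the slow work runs in the task, and only the short piece of code that touches shared state or the UI is queued back to the main thread.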

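Once the connection is established, the Connected event handler sends the text from the memo to the speech service and plays the synthesized audio:

    procedure TForm1.TMSFMXCloudMSBingSpeech1Connected(Sender: TObject);
    var
      st: TMemoryStream;
      s: string;
    begin
      st := TMemoryStream.Create;
      try
        s := AnalysisResult.Lines.Text;
        // synthesize the text into the stream, then play it
        TMSFMXCloudMSBingSpeech1.Synthesize(s, st);
        TMSFMXCloudMSBingSpeech1.PlaySound(st);
      finally
        st.Free;
      end;
    end;

Now, let's try out the app in the real world. Here are a few examples we tested.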

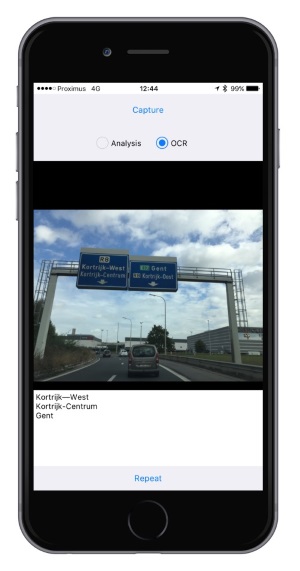

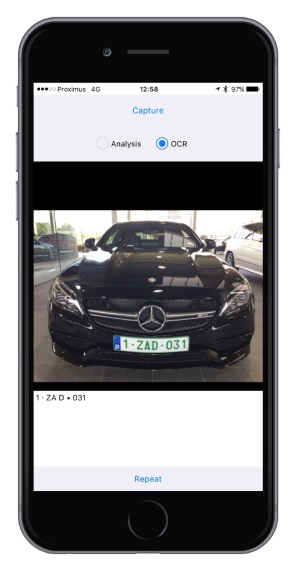

Using the app on the road to read road signs and capture car license plates

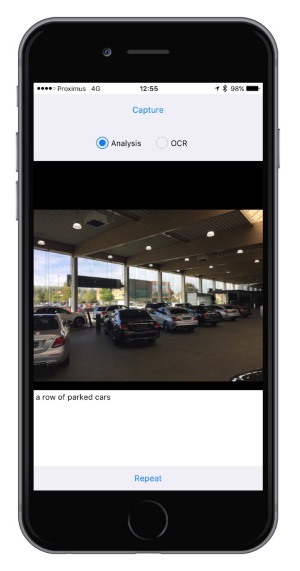

Trying to figure out what we see in a showroom

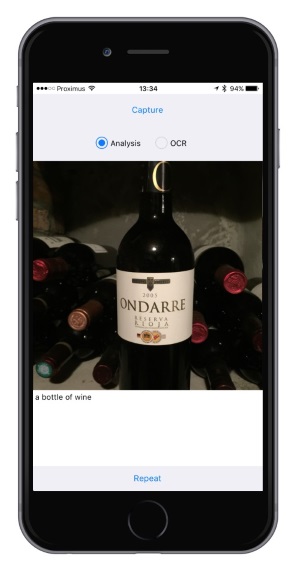

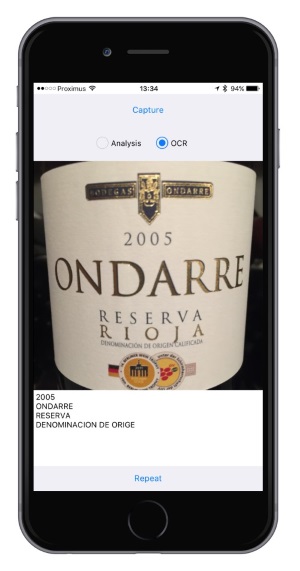

The app first correctly recognized a bottle of wine in the cellar and was then pretty good at reading the wine bottle label.

You can download the full source code of the app here and have fun discovering these new capabilities.

Enjoy!

Bruno Fierens

This blog post has received 6 comments.

1.

I have 2 questions:

1 - The bing speech return a "access denied" error

2 - Why only iphone works?

thanks

antonello

Carlomagno Antonello

2. Friday, September 30, 2016 at 4:49:38 PM

1) Did you obtain a proper API key for Bing speech?

2) We tested this sample for iPhone but I expect this to work for Android as well. In fact, I don't see much reason why it wouldn't work for Android, but I haven't tested it on Android so far.

Bruno Fierens

3. Saturday, October 1, 2016 at 12:03:34 PM

Hi Bruno,

1) yes sure!

2) same code not works

Antonello

Carlomagno Antonello

4. Saturday, October 1, 2016 at 12:47:07 PM

What exact issue on what exact Android version with what exact Delphi version?

Meanwhile we got confirmation from other users that the code also works on Android, so there must be something specific to your IDE / Android setup, I guess.

Bruno Fierens

5. Saturday, July 29, 2017 at 5:01:09 PM

no;

rekan.jalal19@gmail.com

6. Wednesday, June 6, 2018 at 5:24:05 AM

I am trying to run the example, but have the feeling that Microsoft introduced new APIs so maybe it is not working anymore. Or maybe the keys I get from Microsoft are for the new v2.0 API. Can you please confirm that this example is working and if so, how exactly the properties of the controls are set. Thank you very much in advance.

Stoyan